Event-Driven Architecture for LLM Automatic Translation

Handling LLM Automatic Translation as an Asynchronous Event Flow

1. Introduction

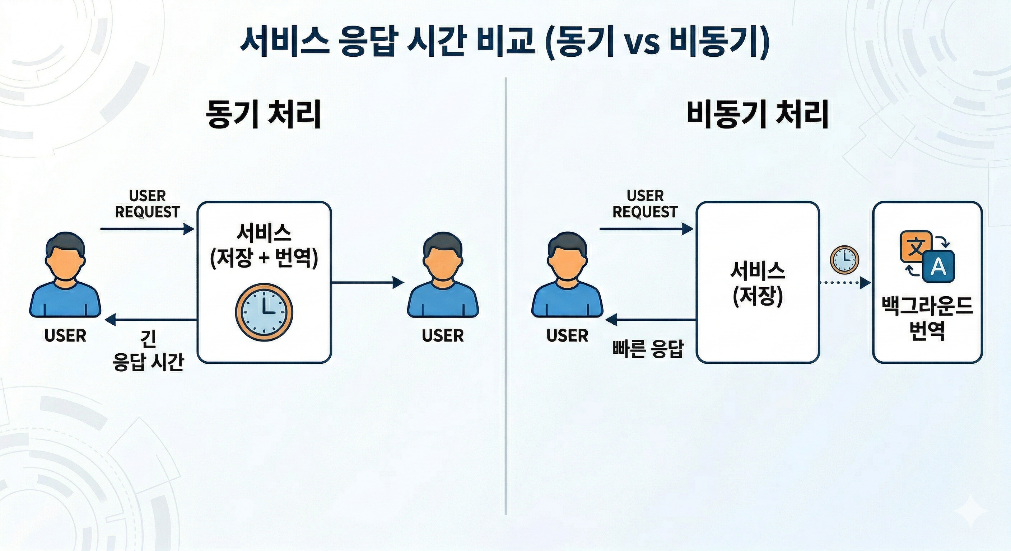

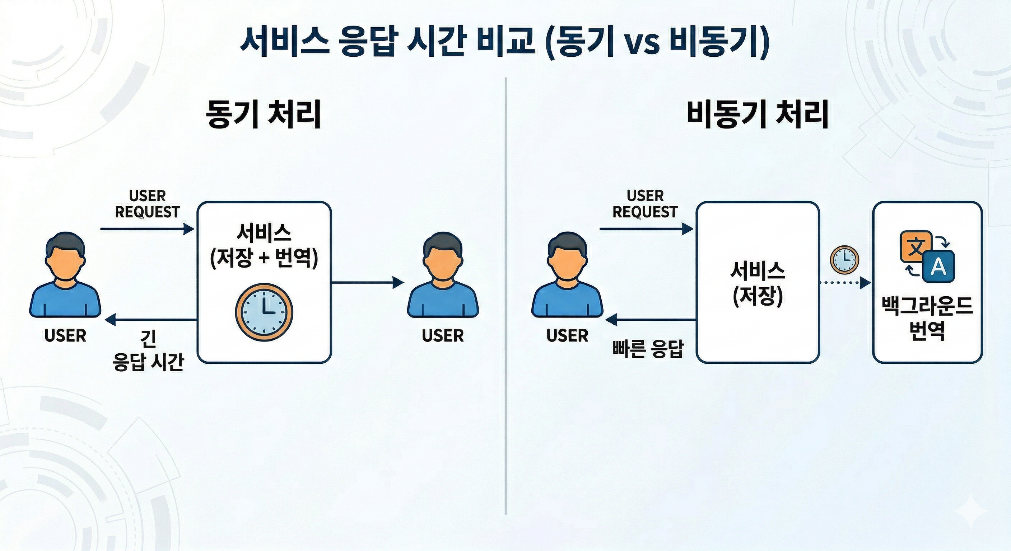

While designing our recent automatic translation feature for multilingual strings, I realized that the harder problem was not calling an LLM to obtain a translation, but deciding how LLM calls should fit into the existing service request flow. Unlike typical internal logic, LLM-based processing does not have a consistent response time; it depends on the state of the external model and on network conditions. If the save API makes LLM calls inline, the response time of user requests becomes difficult to predict.

At first, I also considered bundling saving and translation into one flow. From an implementation perspective it looked straightforward: receive a save request, store the original text, call the LLM immediately to obtain the translation, and then respond. However, this approach tightly couples the responsibilities of saving and translating. Saving can finish quickly, but if the translation is delayed, the save response is delayed with it. It also becomes unclear how a single request should report the case where saving succeeds but translation fails.

So for this work, we chose to separate the LLM calls from the user request flow and handle them with event-driven asynchronous processing. The key question was not how to add translation as a simple auxiliary feature, but how to decouple a potentially slow external operation from the service's internal state-change flow.

2. Why did we separate the LLM calls from the request flow?

LLM calls differ from typical business logic. Internal data validation and database writes tend to complete within a relatively predictable timeframe, but the response time of an LLM call varies with model load, network conditions, prompt length, and the number of target languages. If this work is part of a synchronous request, the user ends up waiting on work that has nothing to do with saving.

Failure propagation is a problem as well. If the save API depends directly on LLM calls, an LLM failure looks like a failure of the save function. The user only wanted to save data, yet the whole save request appears to fail because the call to the external model failed. Saving and translating are separate responsibilities, but in a synchronous structure the boundary between them becomes blurred.

The asynchronous structure mitigates this issue. A save request is responsible only for saving and responds quickly. The fact that translation is needed is then expressed as an event, and the translation itself runs in a separate flow. This approach is closer to eventual consistency than to immediate consistency: not every translated value is ready right after saving, but the responsiveness of user requests and the isolation of failures improve.
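
The split described above can be sketched roughly as follows. This is a minimal illustration, not the actual service code: the in-memory dict and `queue.Queue` stand in for the database and the message system, and the names `save_label`, `process_pending`, and the `ko` to `en` language pair are all assumptions made for the example.

```python
from queue import Queue
from typing import Callable, Dict, Tuple

Key = Tuple[str, str]         # (entity id, field name); hypothetical identifier shape
LangStrings = Dict[str, str]  # language code -> text


def save_label(store: Dict[Key, LangStrings], events: "Queue[Key]",
               key: Key, lang_strings: LangStrings) -> str:
    """Request path: save the original, record that translation is needed, return."""
    store[key] = dict(lang_strings)
    events.put(key)   # the event only says "this field needs translation"
    return "saved"    # no LLM call happens before the response


def process_pending(store: Dict[Key, LangStrings], events: "Queue[Key]",
                    translate: Callable[[str], str],
                    source: str = "ko", target: str = "en") -> int:
    """Separate flow: consume events later and fill in the translated value."""
    handled = 0
    while not events.empty():
        key = events.get()
        store[key][target] = translate(store[key][source])
        handled += 1
    return handled
```

Between the two calls the stored data has no `en` value yet, which is the eventual-consistency window mentioned above.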

[Figure 1. Comparison of service response times: Synchronous processing vs. Asynchronous processing]

What mattered to me in choosing this structure was not simply responding quickly. More important was clearly separating out the tasks that can take a long time and being able to handle their success and failure as separate event flows.

3. Keep event contracts small

The first thing that had to be organized in the asynchronous structure was the event contract. An event is not just an object that transmits data, but a promise between different processing flows. Since the side that issues the request and the side that processes the request are not in the same call stack, the coupling of the entire structure changes depending on what information is contained in the event.

At first, it seemed safe to include as much information as possible in the event. However, as the event payload grew larger, the responsibilities of the request phase and processing phase became mixed. The request event should focus on the minimal information needed to perform the translation, while the completion event should concentrate on the information required to reflect the results. Therefore, we separated the roles of the request event and the completion event.

The request event identifies which service, entity, and field need translation, and carries the source language, the multilingual string data, and a flag indicating whether forced re-translation is required. The completion event, by contrast, carries only the identifying information needed to apply the translation results and the translated LangStrings. Separated this way, the request event focuses on 'what to translate' and the completion event focuses on 'what results to apply.'
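
As a rough sketch of the two contracts described above, the events might be modeled like this. The class and field names are illustrative assumptions; only `LangStrings`, `baseLangCode`, and `forceUpdate` correspond to names mentioned in this post, and the real payloads may differ.

```python
from dataclasses import dataclass
from typing import Dict

LangStrings = Dict[str, str]  # language code -> text


@dataclass(frozen=True)
class TranslationRequested:
    """What to translate: identifiers plus the minimum needed to run a translation."""
    service: str
    entity_id: str
    field_name: str
    base_lang_code: str
    lang_strings: LangStrings
    force_update: bool = False


@dataclass(frozen=True)
class TranslationCompleted:
    """What results to apply: identifiers plus the translated values only."""
    service: str
    entity_id: str
    field_name: str
    translated: LangStrings


def to_completed(request: TranslationRequested,
                 translated: LangStrings) -> TranslationCompleted:
    """Build the completion event without copying request-only fields."""
    return TranslationCompleted(request.service, request.entity_id,
                                request.field_name, dict(translated))
```

The completion event deliberately has no `force_update` or source text, so the side applying results cannot start depending on request-phase details.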

Keeping the event contract small also makes change easier. Even if the internal implementation of translation changes from a mock to an actual LLM, the meaning of the request event stays the same. Likewise, if the way translation results are produced changes but the result contract expressed by the completion event remains intact, the side that applies the results needs few changes, if any.

4. Treat translation results as a patch, not as the entire data

How to represent the translation results was another important decision. One option is to return the entire multilingual string again once translation is complete. However, this approach can blur the boundary between the original data and the translation results. In particular, when some values were entered by a person, writing the entire data back can overwrite them unintentionally.

I therefore decided to treat the translation results not as the entire data but as a set of values to be filled in, something closer to a patch. Only languages with empty values were targeted for translation, and languages that already had values were excluded by default. For cases where an existing translation needs to be regenerated, a separate forceUpdate option makes the re-translation explicit.

This policy was less a business rule than a safety mechanism for data changes. Machine translation is convenient, but overwriting carefully crafted phrases with machine output can reduce quality. It was therefore safer to interpret machine translation results as 'data that fills in values that are currently missing' rather than 'data that always replaces the whole.'

From this perspective, the patch approach aligned well with event-based processing. The translation targets are calculated from the original state carried by the request event, and the completion event delivers only results that can be applied. The side applying the results does not need to reinterpret the entire state; it simply applies the received translations where they are needed.
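
The target calculation and patch application described in this section can be sketched as below. The function names, the `supported` language list, and the treatment of whitespace-only values as empty are assumptions for illustration.

```python
from typing import Dict, List

LangStrings = Dict[str, str]  # language code -> text


def translation_targets(lang_strings: LangStrings, base_lang: str,
                        supported: List[str], force_update: bool = False) -> List[str]:
    """Return the languages to translate: empty ones, or all non-base ones if forced."""
    targets = []
    for lang in supported:
        if lang == base_lang:
            continue  # the base language is the source, never a target
        has_value = bool(lang_strings.get(lang, "").strip())
        if force_update or not has_value:
            targets.append(lang)
    return targets


def apply_patch(current: LangStrings, patch: LangStrings) -> LangStrings:
    """Apply only the delivered values; languages absent from the patch stay untouched."""
    merged = dict(current)
    merged.update(patch)
    return merged
```

For example, with `{"ko": "저장", "en": "Save", "ja": ""}` and base language `ko`, only `ja` is a target unless `force_update` is set, so the human-entered `en` value is never sent for translation and never overwritten.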

5. LLM responses are not verified data

The biggest lesson from connecting to an actual LLM was that its responses should not be fully trusted. LLMs excel at generating natural language, but they do not always adhere precisely to the format that the service data requires. Even when the translation itself reads naturally, the response may be broken from the perspective of data structure.

For example, in a sentence like "Hello, {{name}}.", the {{name}} part is not a translation target. It is a placeholder for the user's name, so it must appear unchanged in the translation result. The same applies to URLs, email addresses, HTML tags, and format tokens. If such values are removed or altered, the result is a service data error rather than a translation quality issue.
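
One way to check this is to extract the protected tokens from both texts and compare them as multisets. The sketch below is an assumption about how such a check could look; the regular expression is deliberately simple (for instance, it only knows `%s` and `%d` as format tokens and does not trim punctuation that follows a URL) and is not the pattern used in the actual service.

```python
import re
from collections import Counter

# Simplified patterns for tokens that must survive translation unchanged.
PROTECTED = re.compile(
    r'\{\{\s*\w+\s*\}\}'               # {{name}} style placeholders
    r'|https?://[^\s<>"]+'             # URLs
    r'|[\w.+-]+@[\w-]+(?:\.[\w-]+)+'   # email addresses
    r'|</?[A-Za-z][^>]*>'              # HTML tags
    r'|%[sd]'                          # printf-style format tokens
)


def protected_tokens(text: str) -> Counter:
    """Count every protected token in the text."""
    return Counter(PROTECTED.findall(text))


def preserves_tokens(source: str, translated: str) -> bool:
    """True if the translation contains exactly the same protected tokens."""
    return protected_tokens(source) == protected_tokens(translated)
```

Comparing counts rather than sets also catches a response that duplicates or drops one of several identical placeholders.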

Line breaks were another important verification target. A label string displayed on screen is not always a single-line sentence; it can be multi-line guidance text. If the original text is divided into meaningful units by line breaks and those line breaks are removed in the translation, the layout or context on screen can change. I therefore compared the number of line breaks in the original and the translated text, and treated the response as broken if they differed.
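
The line-break comparison is small enough to sketch directly. The function name is hypothetical, and normalizing Windows-style line endings before counting is an assumption added here so that `\r\n` and `\n` are not counted differently.

```python
def same_line_breaks(source: str, translated: str) -> bool:
    """True if both texts contain the same number of line breaks."""
    def count(text: str) -> int:
        # Treat \r\n and bare \r as a single line break before counting.
        return text.replace("\r\n", "\n").replace("\r", "\n").count("\n")
    return count(source) == count(translated)
```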

Writing systems also had to be handled explicitly for some languages. Uzbek, for example, is written in both Latin and Cyrillic scripts, so simply asking for "Uzbek" can produce output in a different script from the one intended. Language codes that include the writing system, such as uz-Latn and uz-Cyrl, were therefore converted into promptLabels, and I also checked whether the script of the response matched.
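
A script check along these lines can be approximated with the standard `unicodedata` module by looking at Unicode character names. The label wording in `PROMPT_LABELS`, the rule table, and the function name are assumptions for illustration, and the sketch assumes protected tokens such as {{name}} have been removed from the text before the check, since they would otherwise count as Latin letters.

```python
import unicodedata

# Hypothetical prompt labels; the wording used in the real prompts may differ.
PROMPT_LABELS = {
    "uz-Latn": "Uzbek (Latin script)",
    "uz-Cyrl": "Uzbek (Cyrillic script)",
}

# For each script-specific code: (expected script, script that must not appear).
SCRIPT_RULES = {
    "uz-Latn": ("LATIN", "CYRILLIC"),
    "uz-Cyrl": ("CYRILLIC", "LATIN"),
}


def matches_script(lang_code: str, text: str) -> bool:
    """True if the text uses the expected script and none of the conflicting one."""
    rule = SCRIPT_RULES.get(lang_code)
    if rule is None:
        return True  # no script rule defined for this language code
    expected, forbidden = rule
    # Unicode names start with the script, e.g. "CYRILLIC SMALL LETTER A".
    names = [unicodedata.name(ch, "") for ch in text if ch.isalpha()]
    has_expected = any(name.startswith(expected) for name in names)
    has_forbidden = any(name.startswith(forbidden) for name in names)
    return has_expected and not has_forbidden
```

Checking for the absence of the conflicting script, rather than requiring every letter to be in the expected one, keeps modifier letters such as the apostrophe-like character in Latin Uzbek from failing the check.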

Through this process, I realized that a good prompt is not the only thing that matters in an LLM feature. A prompt steers the model toward the desired result, but the service code must verify that the result satisfies the actual data rules. LLM responses are not data that can be trusted as-is; they are closer to candidate data that must pass validation before being applied.

6. Classifying failures and dividing retry criteria

How to handle failures was also important in the asynchronous structure. In a synchronous API, a failure can be returned as an HTTP response, but in event-driven processing the time of the request and the time of processing are separated. Failures therefore had to be expressible within the event flow.

Handling all failures in the same way makes operations difficult. Temporary external failures like network issues or LLM timeouts can succeed with retries. In contrast, cases where there is no reference language, the original text is empty, or the LLM response does not pass structural validation are not easily resolved by simple retries. Therefore, we categorized failures into retryable errors and non-retryable errors.

LLM request failures and timeouts are treated as retryable errors and are rethrown so that the message processing system's retry flow picks them up. Conversely, cases that indicate a data or contract mismatch, such as missing required values, missing original text, or response format errors, are published as failure events. This distinction lets us handle temporary external failures and data contract issues in different ways.

Publishing failure events makes follow-up processing clearer. A failure event can record the entity and field that failed, an error code, and whether a retry is possible, and this information can later be used by operational tools or separate correction flows. In an asynchronous structure, I found it more reliable to express failures as events the system can understand than to leave them only as log lines.
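
The two failure paths in this section can be sketched as follows. All names here (`RetryableTranslationError`, `NonRetryableTranslationError`, `TranslationFailed`, `handle`, and the `EMPTY_SOURCE` style error codes) are hypothetical, and the `publish` callback stands in for whatever the message system provides.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


class RetryableTranslationError(Exception):
    """Transient failure; raised so the message system's retry flow takes over."""


class NonRetryableTranslationError(Exception):
    """Data or contract problem that a retry will not fix."""
    def __init__(self, code: str):
        super().__init__(code)
        self.code = code


@dataclass(frozen=True)
class TranslationFailed:
    entity_id: str
    field_name: str
    error_code: str
    retryable: bool


def handle(request: Dict[str, str],
           translate: Callable[[Dict[str, str]], Dict[str, str]],
           publish: Callable[[TranslationFailed], None]) -> Optional[Dict[str, str]]:
    """Run one translation and route failures by category."""
    try:
        return translate(request)
    except (TimeoutError, ConnectionError) as exc:
        # Temporary external failure: rethrow and let the consumer be retried.
        raise RetryableTranslationError(str(exc)) from exc
    except NonRetryableTranslationError as exc:
        # Contract or data mismatch: record it as an event instead of retrying.
        publish(TranslationFailed(request["entity_id"], request["field_name"],
                                  exc.code, retryable=False))
        return None
```

Because the failure event is a plain data object, an operations tool can later filter by `error_code` or `retryable` without parsing logs.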

7. From Mock verification to Live LLM verification

In the initial stage, instead of connecting directly to the actual LLM, we first validated the event flow against a Mock. Had we connected the actual model right away, it would have been hard to tell whether a given problem came from the event contract, the response parsing, or the model invocation. So we first used the Mock to check that request events passed through the processing flow, that completion events were published, and that the translation targets were calculated as intended.

Next, we connected the actual LLM to verify the translation quality and the response validation logic. Here we looked at more than whether the translation result was non-empty. We also checked whether placeholders were retained, whether line breaks were preserved, whether the writing system was correct, whether the defaultLangCode was used as the base language when the baseLangCode was omitted, and whether forceUpdate caused existing values to be re-translated.
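
A Mock that is useful for the first stage can be very small. The sketch below assumes a hypothetical `Translator` interface and a `resolve_base_lang` helper; the mock simply tags the source text with the target language, so placeholders and line breaks survive and any failure observed in a test points to the event flow rather than to the model.

```python
from typing import Dict, List, Optional, Protocol


class Translator(Protocol):
    """Hypothetical interface shared by the mock and the live LLM client."""
    def translate(self, text: str, targets: List[str]) -> Dict[str, str]: ...


class MockTranslator:
    """Deterministic stand-in: returns the source text tagged with each target."""
    def translate(self, text: str, targets: List[str]) -> Dict[str, str]:
        return {lang: f"[{lang}] {text}" for lang in targets}


def resolve_base_lang(base_lang_code: Optional[str], default_lang_code: str) -> str:
    """Fall back to the default language when no base language is given."""
    return base_lang_code or default_lang_code
```

Swapping `MockTranslator` for a live client that satisfies the same interface leaves the event handling code unchanged, which is what allows the two verification stages to be run separately.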

This testing process reaffirmed that 'the LLM responded' and 'the result can safely be applied to service data' are two different things. LLM integration tests must check not only that the model call succeeded but also that the result satisfies the data contracts the system requires.

8. Conclusion

Through this work, I realized that the code that calls the model is not the only thing that matters when integrating LLM functionality into a service. It was also necessary to separate long-latency tasks from the request flow, keep event contracts small, turn LLM responses into verifiable data, and categorize failures as retryable or non-retryable.

Ultimately, the core of the LLM automatic translation feature is not 'making the LLM translate' but building a structure that safely handles the LLM's output within the service data flow. This design was an attempt to separate LLM calls into an event-driven asynchronous flow and to manage non-deterministic responses through verification, failure handling, and observability.

jyyou

[References]

Microsoft Learn, Asynchronous Request-Reply Pattern

https://learn.microsoft.com/ko-kr/azure/architecture/patterns/asynchronous-request-reply

IBM, What Is Event-Driven Architecture?

https://www.ibm.com/kr-ko/think/topics/event-driven-architecture

KakaoPay Tech Blog, Using Event-Driven Architecture Appropriately

https://tech.kakaopay.com/post/event-driven-architecture/