1. Introduction

The most common piece of user feedback we received while developing Qra ([Qurator]), a GitOps-based deployment orchestration service within the Vizend platform that automatically deploys and manages subscribed applications, was: “I have no idea where my deployment currently is in the process.”

Qra’s deployment pipeline goes through the following stages:

Request Validation → Deployment Preparation → GitOps Push → ArgoCD Sync → Pod Stabilization → Completion Notification

Each stage must complete successfully before the next stage begins, and the overall process can take anywhere from several seconds to several minutes. However, the existing system could not show intermediate progress to users until the final result was produced. Users were left feeling as if they were standing in front of an elevator without a floor indicator, forced to wait without knowing what was happening. We needed a way to project the internal state of the pipeline in real time.

2. Why SSE (Server-Sent Events)?

We evaluated three options for real-time data delivery.

| Category | SSE | WebSocket | Polling |
|---|---|---|---|
| Data Direction | One-way (Server → Client) | Bidirectional | One-way (Client → Server) |
| Reconnection | Automatically handled by EventSource | Requires manual implementation | Not applicable (each request is independent) |
| Infrastructure Compatibility | Standard HTTP | Requires Upgrade header handling | Standard HTTP |
| Implementation Complexity | Low | Medium to High | Low |
| Real-time Capability | High (immediate on event occurrence) | High (immediate on event occurrence) | Depends on polling interval |
| Server Resource Usage | Maintains emitter per connection | Maintains session per connection | Released immediately after request-response |

The essence of deployment monitoring is a one-way propagation of state changes occurring on the server to the client. There was no need to increase implementation complexity by introducing bidirectional communication through WebSocket. Additionally, SSE uses standard HTTP, making infrastructure configuration much simpler, and browsers automatically attempt reconnection when temporary network disconnections occur. Given the requirements at the time, SSE appeared to be the most economical yet powerful solution.

3. SSE Architecture Design

3.1. Separation of Business Logic and Transport Infrastructure

We tried to uphold the principle that:

“Business logic should not be aware of the existence of the transport channel.”

If the Feature layer responsible for the deployment pipeline directly handled SseEmitter, coupling between components would increase, making future changes difficult. To solve this, we used Spring’s ApplicationEventPublisher as a bridge.

- Feature Layer: Only publishes events such as “stage completed” or “status changed.”
- Facade Layer: Subscribes to events, records them in the database (TimelineFlow.record), and simultaneously transmits them through SSE (sseRegistry.broadcast).

Thanks to this event-driven structure, the deployment logic did not need to care about what transport mechanism was being used. This later became the decisive foundation that allowed us to remove SSE with minimal code changes.
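The separation described above can be sketched roughly as follows. This is a minimal illustration, not Qra's actual code: SimpleEventBus is a hypothetical stand-in for Spring's ApplicationEventPublisher so that the example runs without Spring, and StageCompleted, DeploymentFeature, and DeploymentFacade are assumed names. The two lists in the facade merely stand in for TimelineFlow.record and sseRegistry.broadcast.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

// Hypothetical event type; the real event classes are not shown in this article.
class StageCompleted {
    final String deploymentId;
    final String stage;

    StageCompleted(String deploymentId, String stage) {
        this.deploymentId = deploymentId;
        this.stage = stage;
    }
}

// Stand-in for Spring's ApplicationEventPublisher (assumption, for a Spring-free sketch).
class SimpleEventBus {
    private final List<Consumer<StageCompleted>> listeners = new ArrayList<>();

    void subscribe(Consumer<StageCompleted> listener) {
        listeners.add(listener);
    }

    void publish(StageCompleted event) {
        for (Consumer<StageCompleted> listener : listeners) {
            listener.accept(event);
        }
    }
}

// Feature layer: publishes domain events and knows nothing about SSE or polling.
class DeploymentFeature {
    private final SimpleEventBus bus;

    DeploymentFeature(SimpleEventBus bus) {
        this.bus = bus;
    }

    void completeStage(String deploymentId, String stage) {
        // Domain work would happen here before the event is published.
        bus.publish(new StageCompleted(deploymentId, stage));
    }
}

// Facade layer: the only place that knows about persistence and transport.
class DeploymentFacade {
    final List<String> timeline = new ArrayList<>();  // stands in for TimelineFlow.record
    final List<String> broadcast = new ArrayList<>(); // stands in for sseRegistry.broadcast

    DeploymentFacade(SimpleEventBus bus) {
        bus.subscribe(this::onStageCompleted);
    }

    private void onStageCompleted(StageCompleted event) {
        String line = event.deploymentId + ":" + event.stage;
        timeline.add(line);
        broadcast.add(line);
    }
}

class EventBridgeDemo {
    public static void main(String[] args) {
        SimpleEventBus bus = new SimpleEventBus();
        DeploymentFacade facade = new DeploymentFacade(bus);
        new DeploymentFeature(bus).completeStage("dep-1", "GitOps Push");
        System.out.println(facade.timeline);
    }
}
```

In a layout like this, swapping the transport only touches the facade: the broadcast call can be dropped while DeploymentFeature stays unchanged.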

3.2. Channel Strategy Through Separation of Concerns

The scope of information required differed by user. Some users wanted to monitor the detailed progress of a specific deployment, while operators wanted to oversee the deployment status board for the entire application.

To support this, we introduced a management object called SseRegistry, internally using ConcurrentHashMap to separate channels. By segmenting keys using either deploymentId or pavilionId, only relevant events were sent to targeted clients, preventing unnecessary network traffic.
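A keyed registry of this kind might look like the sketch below. It is an assumption-laden illustration rather than the real SseRegistry: ChannelRegistry, the "deployment:" and "pavilion:" key prefixes, and the use of Consumer<String> in place of SseEmitter are all hypothetical choices made to keep the example free of Spring dependencies.

```java
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.function.Consumer;

// Hypothetical registry; a Consumer<String> stands in for an SseEmitter.
class ChannelRegistry {
    private final Map<String, List<Consumer<String>>> channels = new ConcurrentHashMap<>();

    // Assumed key scheme: one namespace per channel type.
    static String deploymentKey(String deploymentId) {
        return "deployment:" + deploymentId;
    }

    static String pavilionKey(String pavilionId) {
        return "pavilion:" + pavilionId;
    }

    void register(String key, Consumer<String> client) {
        channels.computeIfAbsent(key, k -> new CopyOnWriteArrayList<>()).add(client);
    }

    // Sends the payload only to clients registered under the given key
    // and returns how many clients received it.
    int broadcast(String key, String payload) {
        List<Consumer<String>> clients = channels.getOrDefault(key, List.of());
        for (Consumer<String> client : clients) {
            client.accept(payload);
        }
        return clients.size();
    }
}

class ChannelRegistryDemo {
    public static void main(String[] args) {
        ChannelRegistry registry = new ChannelRegistry();
        registry.register(ChannelRegistry.deploymentKey("42"), msg -> System.out.println("user: " + msg));
        registry.register(ChannelRegistry.pavilionKey("7"), msg -> System.out.println("operator: " + msg));
        registry.broadcast(ChannelRegistry.deploymentKey("42"), "ArgoCD Sync finished");
    }
}
```

Because the lookup is by key, a client watching one deployment never receives traffic intended for another deployment or for the pavilion-wide board.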

3.3. Defensive Mechanisms Against Resource Exhaustion

SSE has a stateful nature because server resources remain occupied while the connection is maintained. If left unmanaged, zombie connections could accumulate, eventually exhausting server memory and thread pools.

To prevent this, we introduced three defensive mechanisms:

- Connection Limits: Connections were limited to 10 per key (deployment/pavilion) and 100 globally across the server. If the limit was exceeded, the server returned 429 Too Many Requests, and the client automatically switched to Polling mode to preserve availability.
- Immediate Resource Cleanup: We registered onCompletion, onTimeout, and onError callbacks so that connections would be immediately removed from the registry regardless of how they terminated.
- Active Heartbeat Detection: Empty data was periodically sent every 25 seconds to detect and remove “ghost connections” where the client terminated abnormally without properly sending a TCP FIN packet.
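The first two mechanisms can be illustrated with a small bookkeeping class. This is a sketch under stated assumptions, not the production code: ConnectionLimiter, tryRegister, and release are hypothetical names, connections are identified by an assumed string ID, and the heartbeat is left out. A controller would translate a false return value into 429 Too Many Requests, and each termination callback would call release.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

// Hypothetical limiter enforcing the per-key and global limits from the text.
class ConnectionLimiter {
    static final int MAX_PER_KEY = 10;
    static final int MAX_GLOBAL = 100;

    private final Map<String, Set<String>> connectionsByKey = new HashMap<>();
    private int total = 0;

    // Returns false when either limit is reached or the ID is already registered.
    synchronized boolean tryRegister(String key, String connectionId) {
        Set<String> connections = connectionsByKey.computeIfAbsent(key, k -> new HashSet<>());
        boolean rejected = total >= MAX_GLOBAL
                || connections.size() >= MAX_PER_KEY
                || !connections.add(connectionId);
        if (rejected) {
            if (connections.isEmpty()) {
                connectionsByKey.remove(key); // do not keep empty buckets around
            }
            return false;
        }
        total++;
        return true;
    }

    // Safe to call from completion, timeout, and error paths alike:
    // releasing the same connection twice has no further effect.
    synchronized void release(String key, String connectionId) {
        Set<String> connections = connectionsByKey.get(key);
        if (connections != null && connections.remove(connectionId)) {
            total--;
            if (connections.isEmpty()) {
                connectionsByKey.remove(key);
            }
        }
    }

    synchronized int total() {
        return total;
    }
}

class ConnectionLimiterDemo {
    public static void main(String[] args) {
        ConnectionLimiter limiter = new ConnectionLimiter();
        for (int i = 0; i <= ConnectionLimiter.MAX_PER_KEY; i++) {
            boolean accepted = limiter.tryRegister("deployment:1", "conn-" + i);
            System.out.println("conn-" + i + " accepted=" + accepted);
        }
    }
}
```

Making release idempotent matters here because more than one termination callback may fire for the same connection.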

4. The Wall of Horizontal Scaling (Scale-out)

In a single-instance environment, SSE worked exactly as intended. However, limitations became apparent in Kubernetes-based horizontally scaled environments.

SSE connections are bound to the memory of a specific server instance. Meanwhile, deployment pipelines may execute on any instance depending on Kafka consumer groups or load balancing. If the instance holding the client's connection differs from the instance where the event occurs, the event is never delivered to that client, and the user receives no updates.

To solve this issue, we reviewed several infrastructures already used internally.

A. Redis Pub/Sub

The first option we considered was Redis.

The idea was that all instances would subscribe to Redis channels, and whenever an event occurred, Redis would distribute the message to every instance. This pattern is widely used as a standard approach in many real-time systems.

However, our shared internal Redis environment was operated primarily as a cache. Because Pub/Sub drops a message immediately if no subscriber is listening at that moment, using it for event delivery would have required additional operational policies. Furthermore, because Qra needed to be flexibly deployable across various customer environments, we questioned whether making Redis a mandatory dependency was the right architectural decision.

B. Kafka

Qra was already actively using Kafka for communication with the Gallery service, so we also considered using Kafka for SSE fan-out.

However, after evaluation, Kafka turned out to be too heavy and philosophically mismatched for this purpose.

- Conflict with the Consumer Group Model: Kafka fundamentally follows a competitive consumption model where “one message is processed by one consumer.” To ensure all instances received the same event, each instance would require its own Consumer Group.
- Lifecycle Management in Dynamic Environments: In Kubernetes, Pods (instances) are constantly created and destroyed. Assigning unique Group IDs per instance (e.g., qra-group-pod-uuid) would create operational debt because unused Group IDs and offset metadata would need to be continuously cleaned up whenever Pods disappeared. This was fundamentally far from Kafka’s original design philosophy of large-scale log processing and persistence.
- Durability vs. Volatility: Kafka guarantees durable message delivery through disk storage and offset management. SSE events, however, are volatile data: “If the event is not delivered to the currently connected user, it loses its meaning.” Performing disk I/O and managing complex offsets solely for momentary UI updates felt close to over-engineering.

5. Returning to Incremental Polling

We returned to the original question:

“Does deployment monitoring truly require second-level real-time push updates?”

Unlike stock trading or chat systems, deployment is not a domain where millisecond-level responsiveness is mission-critical. We concluded that delays of 5–10 seconds would not critically harm user experience.

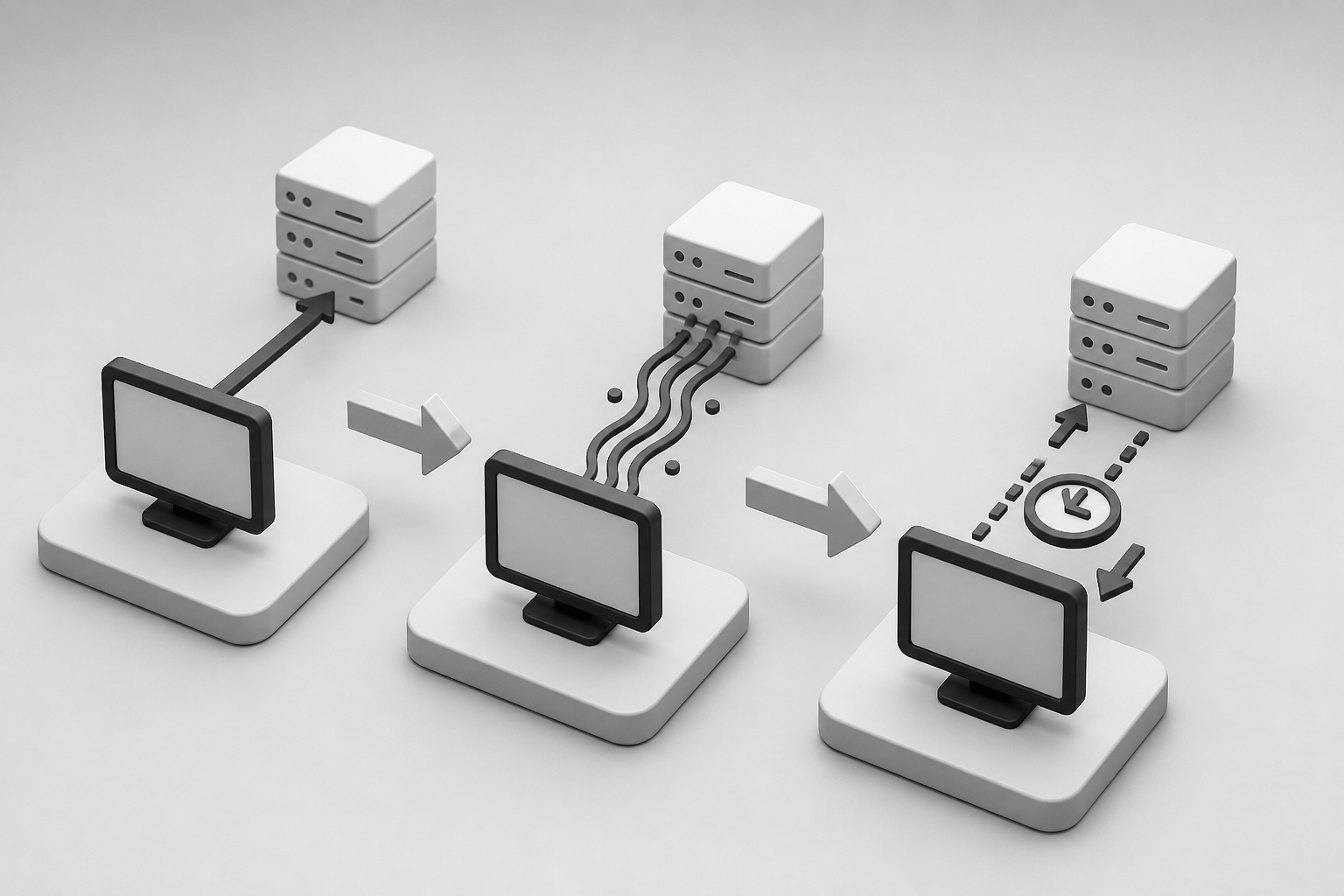

Ultimately, we removed SSE and adopted a cursor-based incremental polling approach.

Simple polling is inefficient because it requests the entire dataset every time. To optimize this, we applied the following pattern:

- After the initial request, the client receives the server response timestamp (queryTime).
- From subsequent requests onward, the client sends after = queryTime.
- The server responds only with delta data generated after that timestamp.

This approach significantly reduced server load while maintaining a level of perceived freshness that was practically indistinguishable from SSE for users.
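The cursor pattern can be sketched in a few lines. This is an illustrative in-memory version under stated assumptions: TimelineStore, TimelineEvent, and PollResponse are hypothetical names, timestamps are plain epoch-style longs, and the current time is passed in explicitly so the behavior is deterministic. Only queryTime and after come from the description above.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical timeline entry.
class TimelineEvent {
    final long createdAt;
    final String message;

    TimelineEvent(long createdAt, String message) {
        this.createdAt = createdAt;
        this.message = message;
    }
}

// Response shape: the delta plus the cursor the client sends back next time.
class PollResponse {
    final List<TimelineEvent> events;
    final long queryTime;

    PollResponse(List<TimelineEvent> events, long queryTime) {
        this.events = events;
        this.queryTime = queryTime;
    }
}

// In-memory stand-in for the database-backed timeline (assumption).
class TimelineStore {
    private final List<TimelineEvent> events = new ArrayList<>();

    synchronized void record(long createdAt, String message) {
        events.add(new TimelineEvent(createdAt, message));
    }

    // after == null is the initial request and returns everything up to now.
    // Later requests return only events with after < createdAt <= now.
    synchronized PollResponse poll(Long after, long now) {
        List<TimelineEvent> delta = new ArrayList<>();
        for (TimelineEvent event : events) {
            boolean notInFuture = event.createdAt <= now;
            boolean newerThanCursor = after == null || event.createdAt > after;
            if (notInFuture && newerThanCursor) {
                delta.add(event);
            }
        }
        return new PollResponse(delta, now);
    }
}

class IncrementalPollingDemo {
    public static void main(String[] args) {
        TimelineStore store = new TimelineStore();
        store.record(100, "Request Validation");
        store.record(200, "Deployment Preparation");
        PollResponse first = store.poll(null, 250);   // initial request: full history
        store.record(300, "GitOps Push");
        PollResponse second = store.poll(first.queryTime, 350); // only the delta
        System.out.println(first.events.size() + " then " + second.events.size());
    }
}
```

One caveat with a timestamp cursor is that an event written after a poll but stamped with the same value as queryTime would be skipped on the next request. A monotonically increasing sequence number is one possible alternative cursor if that edge case matters.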

6. Conclusion and Lessons Learned: Removing Things Is Also Engineering

The process of introducing SSE and eventually removing it was not merely a technical trial-and-error experience. It became a major architectural lesson.

First, abstraction of the transport channel is a lifeline. Because we strictly separated business logic from SSE transport logic early in the architecture, we were able to remove approximately 2,000 lines of SSE-related code without modifying a single line of core domain logic.

Second, the real value of real-time capability must be measured carefully. Rather than becoming obsessed with technically flashy solutions such as SSE or WebSocket, we must objectively evaluate domain characteristics and cost-effectiveness. Sometimes, the simplest solution is the most robust one.

Third, the debt of stateful components must be treated with caution. In modern infrastructures where distributed stateless instances are the norm, introducing technologies that store state on the server requires consideration not only of the feature itself, but also of the cost of additional stateful infrastructure (such as Redis) needed for synchronization.

Removing a feature that once worked successfully can feel disappointing. However, lowering system complexity and removing obstacles to horizontal scalability in order to build a simpler and more resilient system ultimately proved to be the true essence of engineering.